Machine learning relies heavily on data to train models and make accurate predictions. However, in many real-world situations, collecting large datasets can be expensive, time-consuming, or sometimes impossible. This is where bootstrapping becomes extremely useful.

Bootstrapping in machine learning is a powerful statistical technique that helps practitioners estimate the accuracy, stability, and reliability of models using limited data. It lets data scientists create many resampled datasets from a single dataset and analyze how models behave across these variations.

In this article, we will explore what bootstrapping in machine learning is, how it works, its importance, advantages, limitations, and real-world applications.

Understanding Bootstrapping

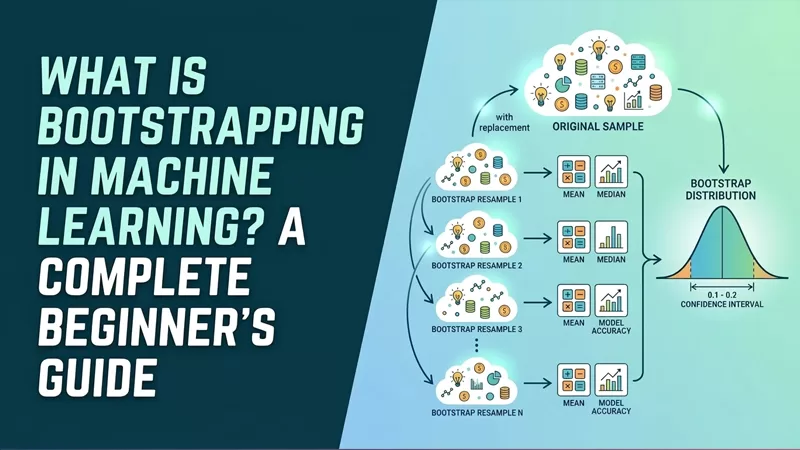

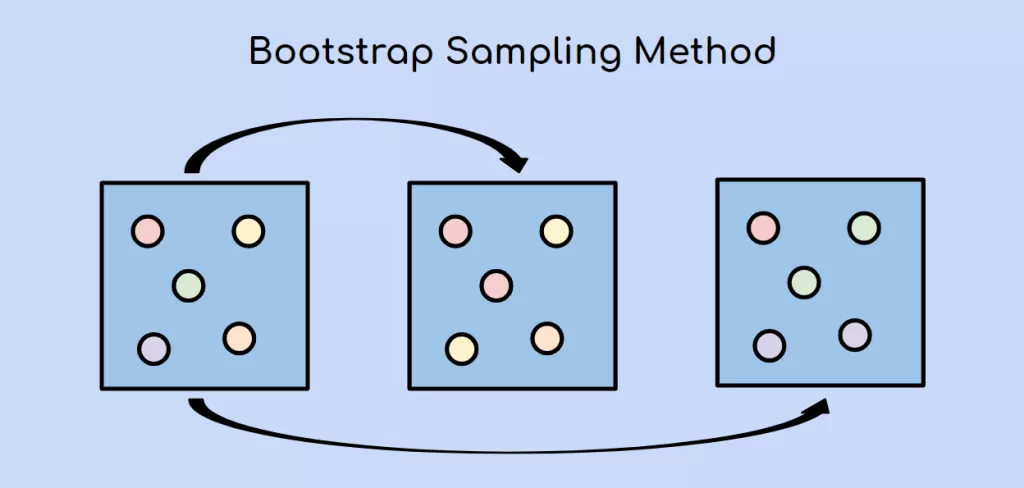

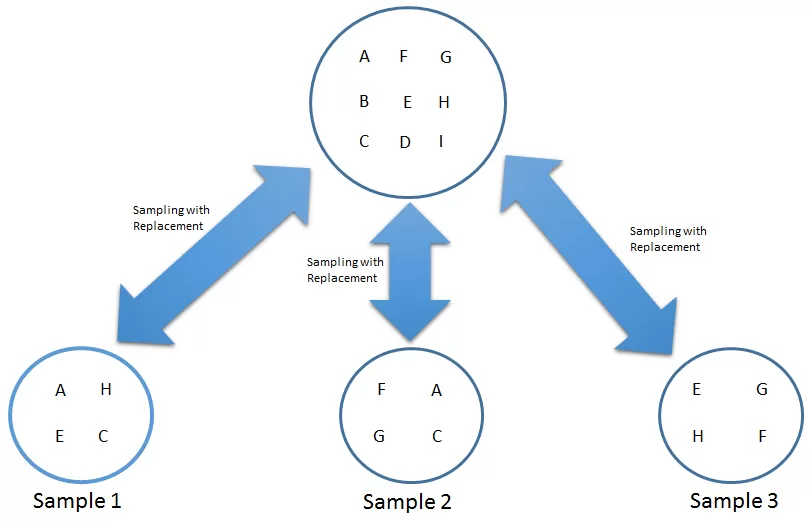

Bootstrapping is a resampling technique used in statistics and machine learning to estimate properties of a dataset by repeatedly sampling from it with replacement.

In simple terms, bootstrapping creates multiple new datasets, each typically the same size as the original, by randomly selecting data points from the original dataset. Since sampling is done with replacement, the same data point can appear multiple times in a new dataset.

These generated datasets are called bootstrap samples.

Machine learning models can then be trained on these samples to estimate metrics like:

- Model accuracy

- Bias

- Variance

- Confidence intervals
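To make the bias and variance items concrete, here is a minimal sketch that estimates them for a single statistic. It assumes the median as the statistic and uses a small made-up dataset. The same loop applies to a model metric if `statistic` is replaced by a function that trains and scores a model. The bootstrap bias estimate is the mean of the bootstrap replicates minus the statistic computed on the original data. The bootstrap variance is the sample variance of those replicates.

```python
import numpy as np

def bootstrap_bias_variance(data, statistic, n_resamples=2000, seed=0):
    """Bootstrap estimates of the bias and variance of `statistic` on `data`."""
    rng = np.random.default_rng(seed)
    data = np.asarray(data)
    theta_hat = statistic(data)  # statistic on the original sample
    # Recompute the statistic on many samples drawn with replacement
    replicates = np.array([
        statistic(rng.choice(data, size=data.size, replace=True))
        for _ in range(n_resamples)
    ])
    bias = replicates.mean() - theta_hat   # bootstrap bias estimate
    variance = replicates.var(ddof=1)      # bootstrap variance estimate
    return theta_hat, bias, variance

# Hypothetical data, for illustration only
data = np.array([2.1, 3.4, 1.9, 5.6, 4.2, 3.3, 2.8, 6.1, 3.9, 4.4])
theta_hat, bias, variance = bootstrap_bias_variance(data, np.median)
print(f"median={theta_hat:.2f}, bias~{bias:.3f}, variance~{variance:.3f}")
```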

This method is particularly useful when the available dataset is small.

Why Bootstrapping is Important in Machine Learning

Bootstrapping in machine learning helps data scientists understand how reliable their models are. Instead of relying on a single dataset, bootstrapping allows the model to be evaluated on multiple variations of the same dataset.

Key reasons bootstrapping is important include:

1. Works with small datasets

When data is limited, bootstrapping helps simulate multiple datasets for training and evaluation.

2. Estimates model uncertainty

Bootstrapping helps estimate the variability of model predictions.

3. Improves model robustness

By training models on different bootstrap samples and combining their predictions, we can build more stable models.

4. Foundation of Ensemble Learning

Bootstrapping plays a crucial role in ensemble algorithms like Bagging and Random Forest.

How Bootstrapping Works

The bootstrapping process follows a simple sequence of steps:

Step 1: Start with the Original Dataset

Suppose we have a dataset with N observations.

Example (N = 4):

| ID | Value |
|---|---|
| 1 | A |
| 2 | B |
| 3 | C |
| 4 | D |

Step 2: Random Sampling with Replacement

Randomly draw N observations from the dataset with replacement, so the bootstrap sample has the same size as the original.

Example bootstrap sample:

| ID | Value |
|---|---|
| 2 | B |
| 4 | D |
| 2 | B |
| 1 | A |

Notice that B appears twice while C is missing.
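The draw in Step 2 can be sketched with NumPy's random `Generator`. The seed is an arbitrary assumption, so the exact rows drawn will differ from the table above. The last part checks a well-known property: for large N, a bootstrap sample contains on average about 63.2% of the distinct original rows. This follows because each row is missed with probability (1 - 1/N)^N, which approaches 1/e.

```python
import numpy as np

rng = np.random.default_rng(42)  # arbitrary seed for reproducibility

ids = np.array([1, 2, 3, 4])
values = np.array(["A", "B", "C", "D"])

# Step 2: draw N row indices with replacement (N = number of rows)
idx = rng.choice(len(ids), size=len(ids), replace=True)
print(list(zip(ids[idx].tolist(), values[idx].tolist())))

# Rows that were never drawn are "out-of-bag" for this sample
oob = np.setdiff1d(np.arange(len(ids)), idx)
print("Missing from this sample:", values[oob])

# Empirical check of the ~63.2% rule with N = 1000 over 200 bootstrap samples
n = 1000
fractions = [np.unique(rng.integers(0, n, size=n)).size / n for _ in range(200)]
avg_fraction = float(np.mean(fractions))
print("Average fraction of distinct rows:", avg_fraction)
```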

Step 3: Repeat the Process

Generate many bootstrap samples (e.g., 1000 samples).

Each sample is used to train a model.

Step 4: Evaluate the Model

The results from all bootstrap models are aggregated to estimate:

- Prediction accuracy

- Confidence intervals

- Model variance
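Steps 2 to 4 can be combined into one loop. The sketch below assumes a synthetic linear dataset and uses a least-squares line (`np.polyfit` with `deg=1`) as the "model." It aggregates three quantities across the bootstrap models: the mean slope, the standard deviation of the slope, and a 95% percentile interval.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical dataset: y depends linearly on x plus noise (assumption for illustration)
x = rng.uniform(0, 10, size=50)
y = 2.0 * x + 1.0 + rng.normal(0, 2.0, size=50)

n_resamples = 1000
slopes = np.empty(n_resamples)
for b in range(n_resamples):
    idx = rng.integers(0, len(x), size=len(x))   # Step 2: resample rows with replacement
    slope, _ = np.polyfit(x[idx], y[idx], deg=1)  # Step 3: fit a model on the sample
    slopes[b] = slope

# Step 4: aggregate across all bootstrap models
print("Mean slope:", slopes.mean())
print("Std. dev. of slope:", slopes.std(ddof=1))
lo, hi = np.percentile(slopes, [2.5, 97.5])
print(f"95% percentile interval: [{lo:.3f}, {hi:.3f}]")
```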

Example of Bootstrapping in Machine Learning

Imagine we are building a house price prediction model.

Dataset size: 100 records

Using bootstrapping:

- Create 1000 bootstrap samples from the dataset

- Train a model on each sample

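- Evaluate each model on the out-of-bag records (the records not drawn into its bootstrap sample)

- Average the resulting error metric (for example, mean absolute error) across all models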

This gives a more reliable estimate of how the model performs on unseen data.
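One way to turn these steps into an estimate for unseen data is to score each model only on its out-of-bag records, meaning the rows its bootstrap sample did not include. The sketch below assumes synthetic house data, with size and price generated from a made-up linear rule rather than real records, and fits a straight-line model. It reports mean absolute error (MAE) rather than "accuracy," since price prediction is a regression task.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical stand-in for 100 house records (synthetic, not real data)
size_m2 = rng.uniform(50, 250, size=100)
price = 3000 * size_m2 + 20000 + rng.normal(0, 40000, size=100)

n_resamples = 1000
oob_mae = []
for _ in range(n_resamples):
    idx = rng.integers(0, 100, size=100)          # bootstrap sample of row indices
    oob = np.setdiff1d(np.arange(100), idx)       # rows this model never saw
    if oob.size == 0:
        continue                                  # practically impossible, but guard anyway
    coef = np.polyfit(size_m2[idx], price[idx], deg=1)  # train a linear model
    pred = np.polyval(coef, size_m2[oob])               # predict the out-of-bag rows
    oob_mae.append(np.mean(np.abs(pred - price[oob])))

oob_mae = np.array(oob_mae)
print(f"Average out-of-bag MAE: {oob_mae.mean():,.0f}")
print(f"Std. dev. of MAE across resamples: {oob_mae.std(ddof=1):,.0f}")
```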

Bootstrapping vs Cross-Validation

Many beginners confuse bootstrapping with cross-validation. While both are resampling techniques, they differ in how they sample the data and in what they are mainly used for.

| Feature | Bootstrapping | Cross-Validation |
|---|---|---|
| Sampling | With replacement | Without replacement |
| Dataset Size | Same size as original | Split into folds |
| Primary Purpose | Estimate statistics | Model evaluation |
| Usage | Ensemble learning | Model validation |

Both techniques help evaluate machine learning models, but bootstrapping focuses more on estimating uncertainty and variance.
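The difference in sampling is visible in the row indices each method produces. This sketch assumes 10 rows and 5 folds. The bootstrap sample contains repeats and leaves some rows out. The cross-validation folds partition the rows, so each row is tested exactly once.

```python
import numpy as np

rng = np.random.default_rng(7)
n, k = 10, 5
indices = np.arange(n)

# Bootstrap: one sample of n indices drawn WITH replacement
boot = rng.choice(indices, size=n, replace=True)
print("Bootstrap sample:", np.sort(boot))
print("Out-of-bag rows:", np.setdiff1d(indices, boot))

# k-fold cross-validation: shuffle once, then split WITHOUT replacement into k folds
folds = np.array_split(rng.permutation(indices), k)
for i, test_idx in enumerate(folds):
    train_idx = np.setdiff1d(indices, test_idx)
    print(f"Fold {i}: train={train_idx}, test={np.sort(test_idx)}")
```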

Advantages of Bootstrapping

Bootstrapping offers several benefits in machine learning and statistics.

1. Works with Small Datasets

Even with limited data, bootstrapping allows the creation of multiple training datasets.

2. Simple and Flexible

It does not require assuming a specific distribution for the data or deriving a formula for the standard error of the statistic being estimated.

3. Useful for Estimating Confidence Intervals

Bootstrapping helps estimate confidence intervals for model parameters.

4. Improves Model Stability

Averaging the predictions of models trained on multiple bootstrap samples reduces variance.

5. Supports Ensemble Techniques

Algorithms like Bagging rely on bootstrapping.

Limitations of Bootstrapping

Despite its advantages, bootstrapping has some limitations.

1. Computationally Expensive

Training a model on each of thousands of bootstrap samples can require significant computing power.

2. Not Ideal for Extremely Small Datasets

Resampling cannot add information that is not already in the data. If the dataset is very small, bootstrap samples overlap heavily, and the resulting estimates can be unreliable.
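A tiny example illustrates the problem. With N observations, there are only C(2N - 1, N) distinct bootstrap samples when each sample is counted as an unordered multiset. For a hypothetical 3-point dataset, this means the bootstrap can produce very few distinct values of a statistic such as the mean.

```python
from itertools import combinations_with_replacement
from math import comb

data = [5, 7, 9]   # hypothetical 3-observation dataset
n = len(data)

# Every distinct bootstrap sample (ignoring order) is a multiset of size n
samples = list(combinations_with_replacement(data, n))
means = sorted({sum(s) / n for s in samples})

print("Distinct bootstrap samples:", len(samples))  # C(2n - 1, n) = 10 for n = 3
print("Distinct values of the mean:", means)
```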

3. May Introduce Bias

Bootstrapping assumes the sample represents the population well.

If the original dataset is biased, bootstrap results may also be biased.

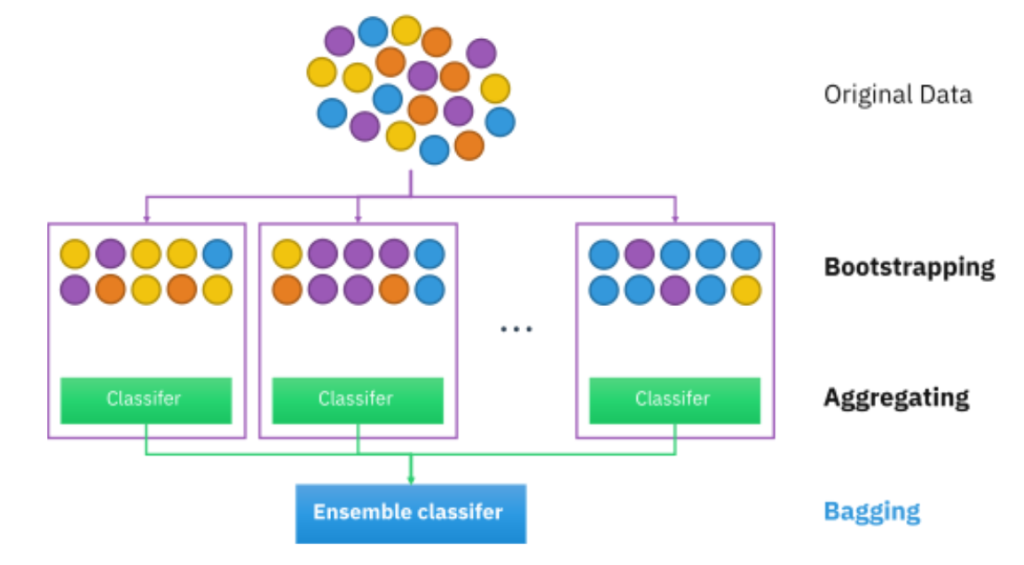

Bootstrapping in Ensemble Learning

Bootstrapping plays a key role in ensemble machine learning methods.

Bagging (Bootstrap Aggregating)

Bagging is an ensemble technique where multiple models are trained on different bootstrap samples.

Steps:

- Generate bootstrap samples

- Train models on each sample

- Combine predictions

This approach reduces variance and overfitting.
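The three steps can be sketched in a few lines. This is a from-scratch illustration, not a library implementation. It assumes a synthetic 1-D dataset and uses a degree-6 polynomial as a deliberately high-variance base model. The predictions of 50 models, each trained on a different bootstrap sample, are then averaged. For real projects, scikit-learn provides `BaggingRegressor` and `BaggingClassifier`.

```python
import numpy as np

rng = np.random.default_rng(3)

# Hypothetical noisy 1-D regression problem (synthetic data)
x = rng.uniform(-1, 1, size=40)
y = np.sin(3 * x) + rng.normal(0, 0.3, size=40)
x_grid = np.linspace(x.min(), x.max(), 200)

def fit_predict(x_train, y_train, x_new, degree=6):
    """Fit a degree-6 polynomial (a high-variance base model) and predict."""
    coef = np.polyfit(x_train, y_train, deg=degree)
    return np.polyval(coef, x_new)

def bagging_predict(x, y, x_new, n_models=50):
    preds = []
    for _ in range(n_models):
        idx = rng.integers(0, len(x), size=len(x))        # 1. bootstrap sample
        preds.append(fit_predict(x[idx], y[idx], x_new))   # 2. one model per sample
    return np.mean(preds, axis=0)                          # 3. combine by averaging

single = fit_predict(x, y, x_grid)
bagged = bagging_predict(x, y, x_grid)
print("Max |single - bagged| on the grid:", np.max(np.abs(single - bagged)))
```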

Random Forest

Random Forest is a popular algorithm that combines bootstrapping with decision trees.

Each tree:

- Trains on a bootstrap sample

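- Considers a random subset of features at each split

The final prediction is obtained by averaging predictions across trees (for regression) or by majority vote (for classification).

This typically yields higher accuracy and better generalization than a single decision tree.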
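To show both sources of randomness together, here is a compact from-scratch sketch of a random forest for regression. Each tree is grown on its own bootstrap sample, and each split considers only `max_features` randomly chosen features. The data, tree depth, and other settings are assumptions chosen for illustration. Production libraries such as scikit-learn's `RandomForestRegressor` are far more complete and efficient.

```python
import numpy as np

def build_tree(X, y, rng, max_depth=4, max_features=2, min_leaf=3, depth=0):
    """Grow a small regression tree; each split tries a random subset of features."""
    if depth == max_depth or len(y) < 2 * min_leaf:
        return float(y.mean())                        # leaf: predict the mean target
    features = rng.choice(X.shape[1], size=max_features, replace=False)
    best = None
    for f in features:
        for t in np.unique(X[:, f])[:-1]:             # candidate thresholds
            left = X[:, f] <= t
            n_left = left.sum()
            if n_left < min_leaf or len(y) - n_left < min_leaf:
                continue
            sse = ((y[left] - y[left].mean()) ** 2).sum() + \
                  ((y[~left] - y[~left].mean()) ** 2).sum()
            if best is None or sse < best[0]:
                best = (sse, f, t, left)
    if best is None:
        return float(y.mean())
    _, f, t, left = best
    return (f, t,
            build_tree(X[left], y[left], rng, max_depth, max_features, min_leaf, depth + 1),
            build_tree(X[~left], y[~left], rng, max_depth, max_features, min_leaf, depth + 1))

def predict_tree(node, x):
    while isinstance(node, tuple):                    # internal node: (feature, threshold, left, right)
        f, t, left, right = node
        node = left if x[f] <= t else right
    return node

def random_forest(X, y, n_trees=20, seed=0, **tree_kwargs):
    rng = np.random.default_rng(seed)
    trees = []
    for _ in range(n_trees):
        idx = rng.integers(0, len(y), size=len(y))    # bootstrap sample for this tree
        trees.append(build_tree(X[idx], y[idx], rng, **tree_kwargs))
    return trees

def predict_forest(trees, X):
    # Regression: average the per-tree predictions
    return np.array([[predict_tree(t, x) for t in trees] for x in X]).mean(axis=1)

# Hypothetical data: 3 features, target driven mostly by the first one
rng = np.random.default_rng(5)
X = rng.uniform(0, 1, size=(120, 3))
y = 4 * X[:, 0] + rng.normal(0, 0.2, size=120)

forest = random_forest(X, y)
pred = predict_forest(forest, X)
mse = float(np.mean((pred - y) ** 2))
print("Training MSE:", mse, "vs. variance of y:", y.var())
```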

Real-World Applications of Bootstrapping

Bootstrapping is widely used across industries.

Finance

Estimating risk and investment returns.

Healthcare

Analyzing clinical trial data and model reliability.

Machine Learning Research

Evaluating model performance and uncertainty.

Marketing Analytics

Understanding customer behavior patterns.

Data Science

Creating reliable statistical estimates.

Bootstrapping in Python (Simple Example)

Below is a basic example of bootstrapping using Python.

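```python
import numpy as np

data = np.array([5, 7, 9, 10, 12])
bootstrap_samples = []  # stores the mean of each bootstrap sample

for i in range(1000):
    # Draw len(data) values from the original data, with replacement
    sample = np.random.choice(data, size=len(data), replace=True)
    bootstrap_samples.append(np.mean(sample))

# Average of the 1000 bootstrap means
print("Estimated Mean:", np.mean(bootstrap_samples))
```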

This code:

- Creates bootstrap samples

- Calculates the mean for each sample

- Averages the bootstrap means, which approximately reproduces the sample mean (8.6)
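To quantify uncertainty, look at the spread of the bootstrap means rather than their average. The minimal extension below assumes the same data and uses NumPy's `default_rng` for reproducible draws. It computes the bootstrap standard error and a 95% percentile interval for the mean. SciPy also offers `scipy.stats.bootstrap` for this purpose.

```python
import numpy as np

rng = np.random.default_rng(0)
data = np.array([5, 7, 9, 10, 12])

# Draw all 1000 bootstrap samples at once: shape (1000, len(data))
samples = rng.choice(data, size=(1000, data.size), replace=True)
boot_means = samples.mean(axis=1)

print("Sample mean:", data.mean())
print("Bootstrap standard error:", boot_means.std(ddof=1))
lo, hi = np.percentile(boot_means, [2.5, 97.5])
print(f"95% percentile interval for the mean: [{lo:.2f}, {hi:.2f}]")
```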

When Should You Use Bootstrapping?

Bootstrapping is useful when:

- The dataset is limited in size

- You want to estimate confidence intervals

- You need to evaluate model uncertainty

- You are building ensemble learning models

- You are performing statistical inference

It is especially valuable in machine learning experimentation and research.

Conclusion

Bootstrapping is a powerful and widely used resampling technique in machine learning and statistics. By repeatedly sampling from the original dataset with replacement, bootstrapping allows data scientists to estimate model performance, variability, and reliability.

This method is especially useful when working with small datasets and forms the foundation for powerful ensemble methods such as Bagging and Random Forest. Despite some computational costs, bootstrapping remains an essential tool for building robust and reliable machine learning models.

As machine learning continues to evolve, techniques like bootstrapping will remain crucial for helping ensure that models are accurate, stable, and trustworthy.

Kaashiv Infotech offers a Full Stack Python Course, a Data Science Course, and more; visit their website at www.kaashivinfotech.com.