What Is a Critical Section in Operating Systems?

Operating systems (OS) are responsible for controlling and coordinating hardware and software resources on a computer. One of the major functions of an OS is process management, which includes multitasking: running multiple processes or threads concurrently. In a multitasking environment, synchronization becomes crucial to ensure processes don’t interfere with each other while sharing data or resources.

The concept of the Critical Section is central to understanding how operating systems manage shared resources. In this article, we will explore what a Critical Section is, why it matters, the problems it introduces, and how operating systems and programmers solve these problems.

What Is a Critical Section?

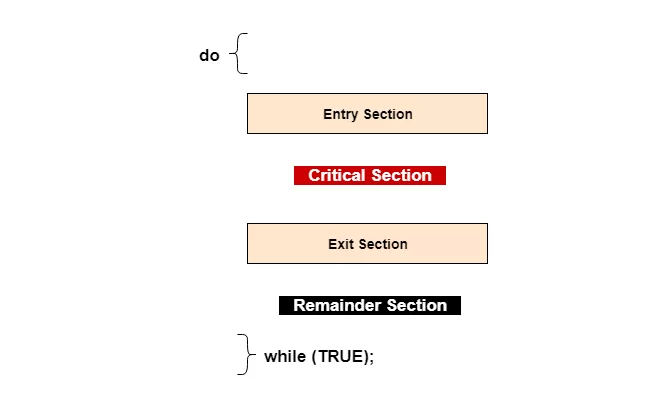

A Critical Section (CS) is a part of a program where shared resources (like variables, memory, files, or devices) are accessed and possibly modified. When multiple processes or threads try to execute their critical section at the same time, race conditions may occur, leading to inconsistent or incorrect results.

Example Scenario

Imagine two threads trying to update the same bank account balance at the same time.

Let’s say:

- Thread A wants to deposit $100.

- Thread B wants to withdraw $50.

- The balance is initially $1000.

Both read the balance at the same time — both see $1000. Now:

- Thread A sets balance to $1100.

- Thread B sets balance to $950.

The correct final balance is $1050, but whichever write lands last wins: the balance ends up as either $950 or $1100, and one of the two updates is lost. This happens because both threads read the same value before either of them wrote its result back.

This part of the program where the balance is read and written is the critical section because accessing or modifying that shared balance must be done in a safe, controlled way.
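The interleaving in this scenario can be replayed step by step. The sketch below is only an illustration: it uses no real threads (so the result is the same on every run), and the class and method names are made up for this example.

```java
// Deterministic replay of the bank account scenario (no real threads).
class LostUpdateDemo {
    static int balance = 1000;

    // Both "threads" read first, then both write: the unsafe interleaving.
    static int unsafeInterleaving() {
        balance = 1000;
        int readByA = balance;      // Thread A reads 1000
        int readByB = balance;      // Thread B reads 1000
        balance = readByA + 100;    // Thread A writes 1100
        balance = readByB - 50;     // Thread B writes 950, overwriting A's deposit
        return balance;
    }

    // Each read-modify-write finishes before the next one starts.
    static int serializedUpdates() {
        balance = 1000;
        balance = balance + 100;    // deposit completes: 1100
        balance = balance - 50;     // withdrawal completes: 1050
        return balance;
    }

    public static void main(String[] args) {
        System.out.println("interleaved: " + unsafeInterleaving()); // 950
        System.out.println("serialized:  " + serializedUpdates());  // 1050
    }
}
```

Treating the read and the write as one indivisible unit is exactly what a critical section is meant to guarantee.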

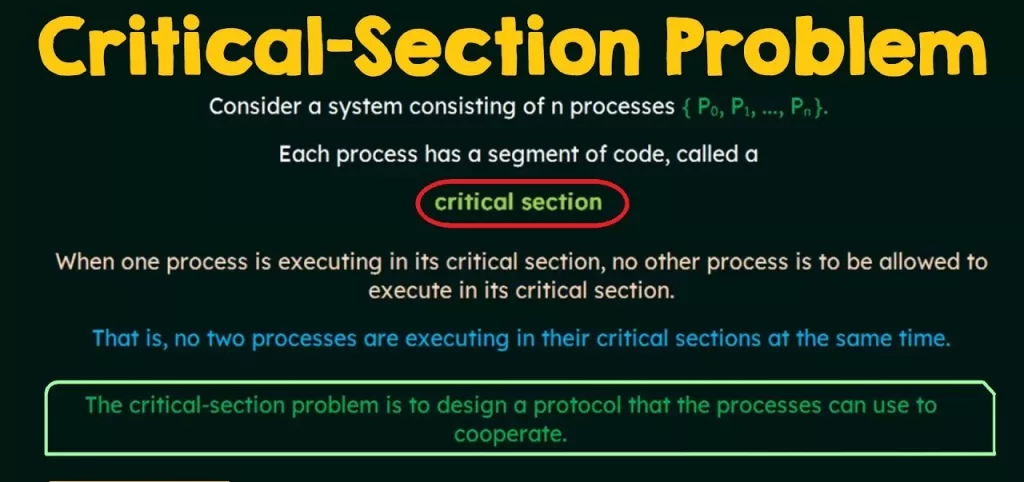

The Critical Section Problem

The Critical Section Problem (CSP) is the problem of designing a protocol that allows processes to enter their critical section in such a way that:

- Only one process enters the critical section at a time. This ensures mutual exclusion: no two processes execute inside the critical section simultaneously.

- No process experiences starvation. Every process that wants to enter its critical section eventually gets a chance.

- Processes are not unnecessarily delayed. If no process is inside the critical section, a process that wants to enter shouldn’t be blocked by processes that are busy with other work.

- The solution should work in all cases. It should handle all possible sequences of process executions, including interrupts, scheduling changes, and hardware concurrency.

In simple terms, the critical section problem asks:

How can an operating system allow multiple competing processes to access shared data or resources safely without interfering with each other?

Why the Critical Section Problem Matters

In real computing environments:

- Multiple users run applications simultaneously.

- Devices generate interrupts that need immediate attention.

- Threads work in parallel to improve performance.

In these situations, multiple units of execution (threads/processes) may need to share:

- Global variables

- Shared memory

- Hardware (printers, network cards)

- Files

Without correct handling:

✔ Data corruption

✔ Unpredictable program behavior

✔ System crashes

✔ Deadlocks

✔ Lost updates

…are likely to occur.

Therefore, handling the critical section problem is essential in operating systems, embedded systems, distributed systems, and even application-level multithreaded programs.

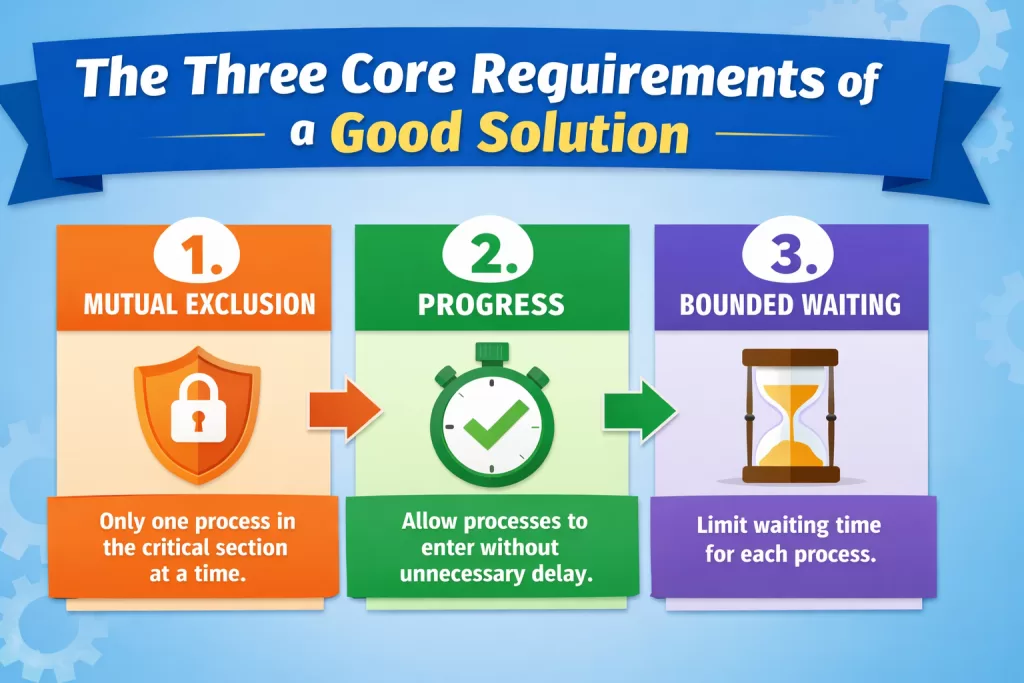

The Three Core Requirements of a Good Solution

To solve the critical section problem effectively, any solution must satisfy:

1. Mutual Exclusion

Only one process can be inside its critical section at a time.

If Process A is in the critical section, any others trying to access it must wait.

2. Progress

If no process is inside the critical section and a process wants to enter, the system must allow it to do so without unnecessary delay.

In other words, the system must make progress and not keep processes waiting forever when it’s safe to enter.

3. Bounded Waiting

Once a process has asked to enter its critical section, there must be a limit on how many times other processes can enter before that request is granted. No process should wait indefinitely (no starvation).

Approaches to Solving the Critical Section Problem

Over time, researchers and designers have developed multiple techniques to enforce mutual exclusion and solve the critical section problem.

Below are the most commonly used approaches:

1. Disabling Interrupts (Hardware Level)

In a uniprocessor system, disabling interrupts can prevent context switches.

- When a process enters its critical section, it disables interrupts.

- While interrupts are disabled, no other process can run.

- After it exits, interrupts are reenabled.

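⚠ Disadvantage: Disabling interrupts on one CPU does not stop code running on other CPUs, so this approach does not provide mutual exclusion on multiprocessor systems. It also delays interrupt handling while the critical section runs, and it cannot safely be offered to ordinary user programs.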

2. Peterson’s Solution (Software Level)

A classic software-only solution for two processes. It uses two shared variables:

- flag[i]: indicates whether process i wants to enter its critical section.

- turn: indicates whose turn it is.

Each process sets its flag and yields turn to the other, then busy-waits until it’s safe to enter.

✅ Works for two processes

❌ Not scalable to many processes, and busy-waiting wastes CPU.
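As a rough sketch, the protocol above might look like this in Java for two threads with ids 0 and 1. The class names, the split of flag[] into two fields, and the use of Thread.yield() inside the wait loop are choices made for this example. The fields are declared volatile on the assumption that, without it, the compiler or CPU may reorder the flag and turn accesses and break the algorithm.

```java
// Peterson's algorithm for exactly two threads (ids 0 and 1).
class PetersonLock {
    private volatile boolean flag0 = false; // thread 0 wants to enter
    private volatile boolean flag1 = false; // thread 1 wants to enter
    private volatile int turn = 0;          // who goes first when both want to enter

    void lock(int id) {
        if (id == 0) {
            flag0 = true;                   // announce intent
            turn = 1;                       // yield the turn to the other thread
            while (flag1 && turn == 1) Thread.yield(); // busy-wait
        } else {
            flag1 = true;
            turn = 0;
            while (flag0 && turn == 0) Thread.yield();
        }
    }

    void unlock(int id) {
        if (id == 0) flag0 = false; else flag1 = false;
    }
}

class PetersonDemo {
    static int counter;

    // Two threads each increment the shared counter perThread times.
    static int run(int perThread) {
        counter = 0;
        PetersonLock lock = new PetersonLock();
        Thread[] threads = new Thread[2];
        for (int id = 0; id < 2; id++) {
            final int me = id;
            threads[id] = new Thread(() -> {
                for (int i = 0; i < perThread; i++) {
                    lock.lock(me);
                    counter++;              // critical section
                    lock.unlock(me);
                }
            });
            threads[id].start();
        }
        for (Thread t : threads) {
            try {
                t.join();
            } catch (InterruptedException e) {
                throw new IllegalStateException(e);
            }
        }
        return counter;
    }

    public static void main(String[] args) {
        System.out.println(run(10_000)); // 20000 when mutual exclusion holds
    }
}
```

In practice a library lock is the better choice; the sketch is mainly useful for seeing how flag and turn interact.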

3. Busy Waiting (Spinlocks)

A process repeatedly checks a condition to see if it can enter the critical section.

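    while (lock == 1) {
        // busy wait
    }
    lock = 1;
    // critical section
    lock = 0;

Written this way, the code is not safe: the check and the assignment lock = 1 are separate steps, so two processes can both see lock == 0 and enter together. A correct spinlock needs the test and the set to happen as one atomic operation (see Atomic Operations below). Even then, spinning wastes CPU cycles, because the process does nothing useful while it waits.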

4. Lock Mechanisms

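Most modern operating systems and programming languages provide locks:

- Mutex (Mutual Exclusion Lock): Only one thread can hold a mutex at a time, and normally the thread that locked it is the one that unlocks it.

- Semaphore: A counter that limits how many threads can use a shared resource at the same time.

- Binary Semaphore: A semaphore whose value is only 0 or 1. It behaves much like a mutex, but it has no owner, so any thread may signal it.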
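The counting behaviour of a semaphore can be sketched with java.util.concurrent.Semaphore. The demo class, the 5 ms sleep standing in for real work, and the bookkeeping counters are assumptions made for illustration only.

```java
import java.util.concurrent.Semaphore;
import java.util.concurrent.atomic.AtomicInteger;

class SemaphoreDemo {
    // Runs `workers` threads through a region guarded by `permits` permits and
    // returns the largest number of threads observed inside it at once.
    static int maxConcurrent(int permits, int workers) {
        Semaphore slots = new Semaphore(permits);
        AtomicInteger inside = new AtomicInteger();
        AtomicInteger maxSeen = new AtomicInteger();
        Thread[] threads = new Thread[workers];
        for (int i = 0; i < workers; i++) {
            threads[i] = new Thread(() -> {
                try {
                    slots.acquire();                    // take a permit or block
                    try {
                        int now = inside.incrementAndGet();
                        maxSeen.accumulateAndGet(now, Math::max);
                        Thread.sleep(5);                // pretend to use the resource
                        inside.decrementAndGet();
                    } finally {
                        slots.release();                // return the permit
                    }
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                }
            });
            threads[i].start();
        }
        for (Thread t : threads) {
            try {
                t.join();
            } catch (InterruptedException e) {
                throw new IllegalStateException(e);
            }
        }
        return maxSeen.get();
    }

    public static void main(String[] args) {
        System.out.println(maxConcurrent(3, 10)); // never more than 3
        System.out.println(maxConcurrent(1, 10)); // one permit acts as a binary semaphore
    }
}
```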

Mutex Example (Pseudo Code):

    mutex_lock(&m);
    // critical section
    mutex_unlock(&m);
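A Java counterpart to this pseudo code might use java.util.concurrent.locks.ReentrantLock as the mutex. The MutexCounter class and its run driver are hypothetical names for this sketch; the try/finally pattern is the usual way to make sure the lock is released.

```java
import java.util.concurrent.locks.ReentrantLock;

class MutexCounter {
    private final ReentrantLock mutex = new ReentrantLock();
    private int value = 0;

    void increment() {
        mutex.lock();            // plays the role of mutex_lock(&m)
        try {
            value++;             // critical section
        } finally {
            mutex.unlock();      // plays the role of mutex_unlock(&m); runs even if the body throws
        }
    }

    int get() {
        mutex.lock();
        try {
            return value;
        } finally {
            mutex.unlock();
        }
    }

    // Hypothetical driver: several threads increment the same counter.
    static int run(int threadCount, int perThread) {
        MutexCounter counter = new MutexCounter();
        Thread[] threads = new Thread[threadCount];
        for (int t = 0; t < threadCount; t++) {
            threads[t] = new Thread(() -> {
                for (int i = 0; i < perThread; i++) counter.increment();
            });
            threads[t].start();
        }
        for (Thread th : threads) {
            try {
                th.join();
            } catch (InterruptedException e) {
                throw new IllegalStateException(e);
            }
        }
        return counter.get();
    }

    public static void main(String[] args) {
        System.out.println(run(4, 10_000)); // 40000
    }
}
```

Unlike a spinlock, a thread that cannot get the lock is put to sleep instead of burning CPU time.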

5. Atomic Operations

Atomic CPU instructions (like Test-and-Set, Compare-and-Swap) help implement lock mechanisms without race conditions.

Example:

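    while (test_and_set(&lock)) {
        // wait: the lock was already held
    }
    // critical section
    lock = 0; // release

test_and_set(&lock) sets lock to 1 and returns its previous value in a single indivisible step, so only one process can ever see the old value 0 and enter.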
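In Java, the same idea can be sketched with AtomicBoolean, whose getAndSet(true) stores true and returns the previous value atomically, which matches the test-and-set contract. SpinLock and SpinLockDemo are illustrative names, and Thread.yield() in the loop is an optional courtesy to the scheduler, not part of the technique.

```java
import java.util.concurrent.atomic.AtomicBoolean;

class SpinLock {
    private final AtomicBoolean locked = new AtomicBoolean(false);

    void lock() {
        // getAndSet(true) returns the old value: false means we took the lock.
        while (locked.getAndSet(true)) {
            Thread.yield();      // still busy-waiting, just more politely
        }
    }

    void unlock() {
        locked.set(false);       // release
    }
}

class SpinLockDemo {
    static int counter;

    static int run(int threadCount, int perThread) {
        counter = 0;
        SpinLock lock = new SpinLock();
        Thread[] threads = new Thread[threadCount];
        for (int t = 0; t < threadCount; t++) {
            threads[t] = new Thread(() -> {
                for (int i = 0; i < perThread; i++) {
                    lock.lock();
                    try {
                        counter++;   // critical section
                    } finally {
                        lock.unlock();
                    }
                }
            });
            threads[t].start();
        }
        for (Thread th : threads) {
            try {
                th.join();
            } catch (InterruptedException e) {
                throw new IllegalStateException(e);
            }
        }
        return counter;
    }

    public static void main(String[] args) {
        System.out.println(run(4, 5_000)); // 20000
    }
}
```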

6. Monitors and Condition Variables

Higher-level concepts in modern languages like Java:

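    synchronized void increment() {
        counter++;
    }

Here, the language runtime ensures mutual exclusion: a thread must hold the object's monitor lock to run a synchronized method, so only one thread at a time executes increment() on a given object. Condition variables (wait and notify in Java) let a thread inside the monitor sleep until another thread changes the state it is waiting for.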
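The condition-variable side of a monitor can be sketched with Java's built-in wait() and notifyAll(). The one-slot Mailbox below and its demo driver are hypothetical; they are a minimal illustration, not a production queue.

```java
// A one-slot mailbox: put() waits while the slot is full, take() waits while it is empty.
class Mailbox {
    private Integer slot = null;   // null means empty

    synchronized void put(int value) throws InterruptedException {
        while (slot != null) wait();   // wait() releases the monitor while sleeping
        slot = value;
        notifyAll();                   // wake any thread waiting in take()
    }

    synchronized int take() throws InterruptedException {
        while (slot == null) wait();
        int value = slot;
        slot = null;
        notifyAll();                   // wake any thread waiting in put()
        return value;
    }
}

class MailboxDemo {
    // A producer thread sends 1..count; the caller receives and sums them.
    static int sumThroughMailbox(int count) {
        Mailbox box = new Mailbox();
        Thread producer = new Thread(() -> {
            try {
                for (int i = 1; i <= count; i++) box.put(i);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        producer.start();
        int sum = 0;
        try {
            for (int i = 0; i < count; i++) sum += box.take();
            producer.join();
        } catch (InterruptedException e) {
            throw new IllegalStateException(e);
        }
        return sum;
    }

    public static void main(String[] args) {
        System.out.println(sumThroughMailbox(100)); // 5050
    }
}
```

The while loops around wait() matter: a woken thread rechecks the condition, because another thread may have changed the slot first.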

Problems and Challenges

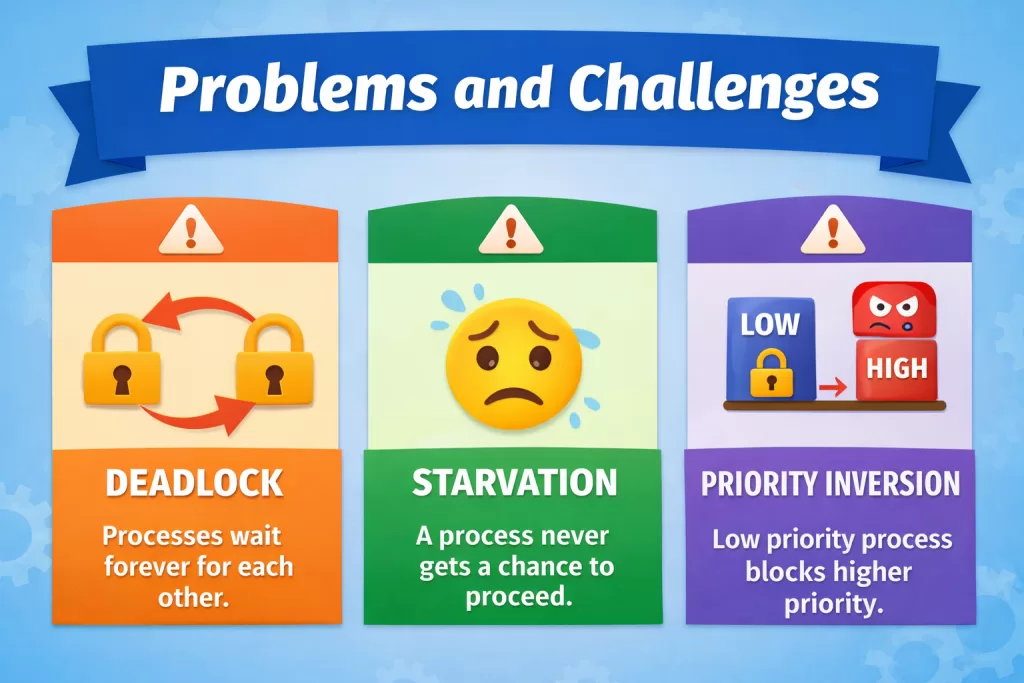

Even with good solutions, protecting critical sections with locks introduces other issues:

Deadlock

Occurs when two or more processes wait forever for each other to release resources.

Example:

Process 1 holds lock A and waits for lock B.

Process 2 holds lock B and waits for lock A.

→ No progress.
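One common way to avoid this particular cycle, which the scenario itself does not cover, is to make every thread acquire the locks in the same order. The sketch below assumes two ReentrantLock objects standing in for lock A and lock B; the class and helper names are made up for this example.

```java
import java.util.concurrent.locks.ReentrantLock;

class OrderedLocks {
    static final ReentrantLock lockA = new ReentrantLock();
    static final ReentrantLock lockB = new ReentrantLock();
    static int shared = 0;

    // Every caller takes A before B, so no thread can hold B while waiting for A.
    static void withBoth(Runnable action) {
        lockA.lock();
        try {
            lockB.lock();
            try {
                action.run();
            } finally {
                lockB.unlock();
            }
        } finally {
            lockA.unlock();
        }
    }

    // Two threads that both need A and B; with a fixed order they always finish.
    static int run(int perThread) {
        shared = 0;
        Runnable worker = () -> {
            for (int i = 0; i < perThread; i++) withBoth(() -> shared++);
        };
        Thread t1 = new Thread(worker);
        Thread t2 = new Thread(worker);
        t1.start();
        t2.start();
        try {
            t1.join();
            t2.join();
        } catch (InterruptedException e) {
            throw new IllegalStateException(e);
        }
        return shared;
    }

    public static void main(String[] args) {
        System.out.println(run(10_000)); // 20000
    }
}
```

If Process 2 took lock B first, as in the example, the circular wait could form again; the fixed order is what rules it out.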

Starvation

When a process never gets the chance to enter its critical section.

Example: A high-priority thread always preempts a low-priority one.

Priority Inversion

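A lower-priority process holds a lock needed by a higher-priority one, so the higher-priority process has to wait. If medium-priority work keeps preempting the lock holder, that wait can last indefinitely.

To fix this, systems use priority inheritance: the lock holder temporarily runs at the priority of the highest-priority process waiting for the lock.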

Real-World Use Cases

Critical section concepts are used in:

✔ Operating System Kernel

✔ Database Management Systems

✔ Web Servers

✔ Embedded Systems

✔ Multithreaded Applications

✔ Real-Time Control Systems

For instance, when multiple threads handle banking transactions, web requests, or sensor inputs simultaneously, protecting shared data is vital.

Conclusion

A critical section is the portion of code that accesses shared resources and therefore must not be executed by more than one process or thread at a time. The Critical Section Problem deals with ensuring safe access to these shared regions without conflicts, data corruption, deadlocks, or starvation.

Understanding and solving this problem is fundamental to designing robust, efficient, and reliable operating systems and concurrent programs. Solutions range from low-level interrupt control and atomic operations to high-level mutexes and monitors — each with its own trade-offs.

By mastering these concepts, developers and engineers can build systems that deliver performance, safety, and efficiency — even under heavy concurrent loads.
