All-in-one management client for PostgreSQL Database – dbForge Studio for PostgreSQL

Devart presents dbForge Studio for PostgreSQL, a Windows-based GUI client for accessing PostgreSQL and a handy tool for PostgreSQL database development and management. Some of its features are listed below:


- Create, develop, and execute queries

- Edit and adjust code as needed through a user-friendly interface

- Generate reports

- Import and export PostgreSQL data with dedicated wizards

- Build pivot tables

- Create master-detail relations

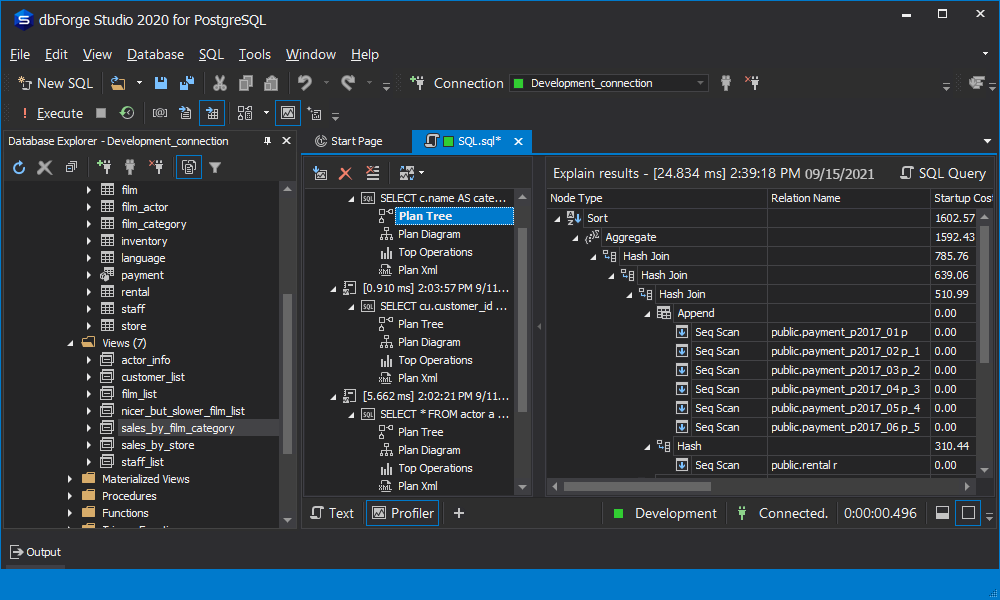

Query Profiler in dbForge Studio for PostgreSQL

A query performance issue arises when a query executed against the source database system takes too long to return data, for example when accessing a virtual table. Query performance depends largely on the design of the database and on choosing appropriate indexes for the data being fetched. Part of the DBA's role is to analyse poorly performing queries and improve the database's indexes accordingly.

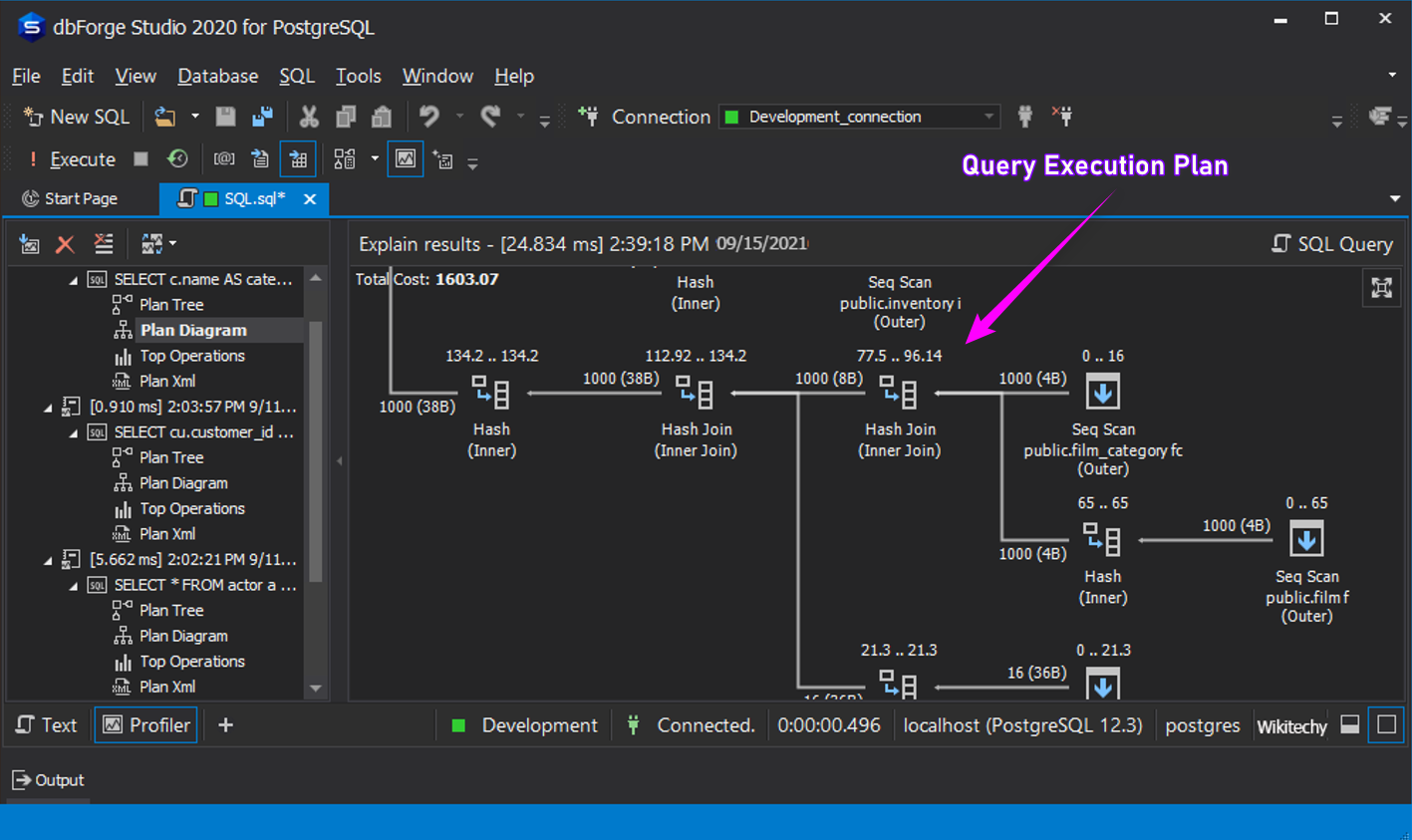

A. Query Execution Plan :

A well-designed application may still experience performance problems when fetching data if its SQL code is poorly constructed. Even in a properly designed database, application design and SQL query problems cause most performance issues. The key to tuning SQL is to minimize the search path the database uses to find the data. Analysing this manually is not an easy task. dbForge Studio provides a Query Profiler that points out performance constraints and, through the Query Execution Plan, gives insight into the execution time of queries.
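To make the idea of a shorter search path concrete, here is a minimal sketch of how an execution plan changes once a suitable index exists. It uses Python's built-in `sqlite3` module and SQLite's `EXPLAIN QUERY PLAN` purely as a stand-in so that it runs without a server; PostgreSQL exposes the same kind of information through `EXPLAIN` and `EXPLAIN ANALYZE`. The `orders` table, the `idx_orders_customer` index, and the `plan_details` helper are hypothetical names chosen for illustration, and this is not how dbForge Studio itself collects plans.

```python
import sqlite3

def plan_details(conn, sql, params=()):
    """Return the textual steps of SQLite's query plan for `sql`."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # The last column of each plan row is the human-readable description.
    return [row[-1] for row in rows]

# Hypothetical table used only for this illustration.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 100, i * 1.5) for i in range(1000)],
)

query = "SELECT total FROM orders WHERE customer_id = ?"

# Without an index the engine has to scan the whole table.
plan_before = plan_details(conn, query, (42,))

# With an index on the filter column it can search the index instead.
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = plan_details(conn, query, (42,))

print("before index:", plan_before)
print("after index: ", plan_after)
```

The exact wording of the plan steps depends on the SQLite version, but the first plan reports a table scan and the second reports a search that uses the index.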

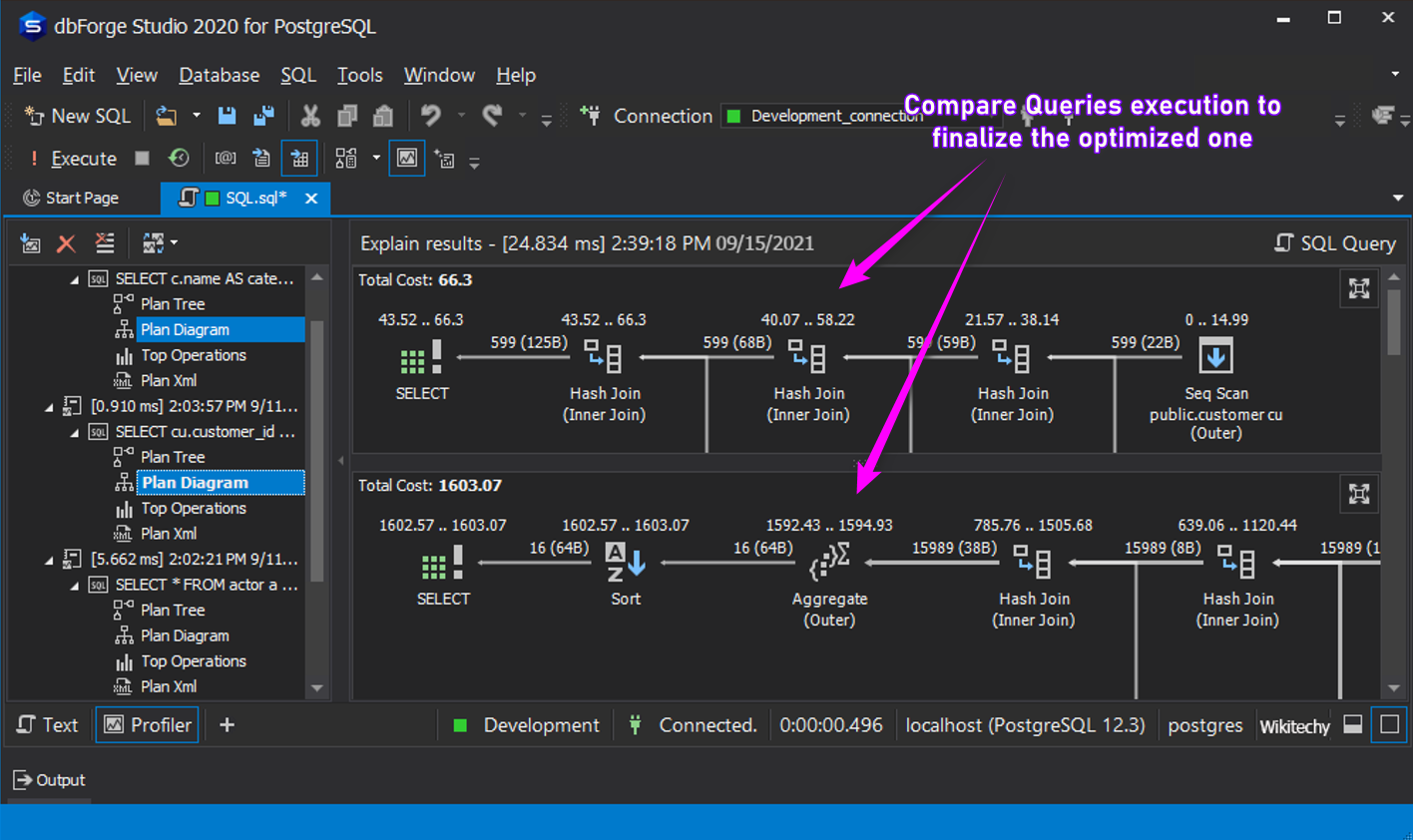

B. Compare Queries for Performance Improvement Suggestion :

Workload performance is affected by loading data from the database into memory on each cycle of operation and by managing the intermediate data sets. Few tools offer a way to compare two queries and suggest which one to use. dbForge Studio lets you compare the execution plans of two queries. This gives the DBA a quick indication of which query performs better.
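As a rough illustration of comparing two formulations of the same query, the sketch below times both and checks that they return the same rows before declaring a winner. It again uses Python's `sqlite3` as a stand-in database; the tables, the two query texts, and the `time_query` helper are assumptions made for this example, and timing results will differ between machines and database engines.

```python
import sqlite3
import time

def time_query(conn, sql, repeats=5):
    """Run `sql` several times; return (best elapsed seconds, rows)."""
    best, rows = float("inf"), []
    for _ in range(repeats):
        start = time.perf_counter()
        rows = conn.execute(sql).fetchall()
        best = min(best, time.perf_counter() - start)
    return best, rows

# Hypothetical schema and data for the comparison.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, region TEXT)")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO customers (id, region) VALUES (?, ?)",
    [(i, "north" if i % 2 else "south") for i in range(200)],
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 200, float(i)) for i in range(5000)],
)

# Two ways of asking for the same rows.
candidates = {
    "subquery": "SELECT o.id FROM orders o WHERE o.customer_id IN "
                "(SELECT id FROM customers WHERE region = 'north') ORDER BY o.id",
    "join": "SELECT o.id FROM orders o JOIN customers c ON c.id = o.customer_id "
            "WHERE c.region = 'north' ORDER BY o.id",
}

results = {name: time_query(conn, sql) for name, sql in candidates.items()}

# A timing comparison is only meaningful if both queries return the same rows.
same_rows = results["subquery"][1] == results["join"][1]
fastest = min(results, key=lambda name: results[name][0])

for name, (elapsed, rows) in results.items():
    print(f"{name}: {elapsed * 1000:.2f} ms, {len(rows)} rows")
print("same rows:", same_rows, "| fastest:", fastest)
```

Wall-clock timing is a coarse measure; comparing the execution plans of the two candidates, as the profiler does, explains why one of them is faster.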

C. Memory Utilization Insights :

dbForge Studio helps identify performance bottlenecks such as:

- Large I/O cycles – Heavy input and output cycles hurt the performance of the database.

- High CPU consumption – Large I/O cycles drive up CPU usage, which slows users down when they connect to and work with the database or application.

- High memory utilization – Large I/O cycles also increase memory usage.

- Heavy computation

- Long execution waits (in terms of elapsed time)

- Heavy use of temporary storage

D. Plan Tree :

The basis for measuring query performance in a PostgreSQL database environment is the time from the submission of a query to the moment its results are returned. A database query has two important measurements:

- The length of time from the submission of the query until the first row/record is returned to the end user

- The length of time from the submission of the query until the last row is returned
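The two measurements above can be sketched in a few lines of Python. The `measure_fetch` helper below is a hypothetical name: it takes a callable that submits the query and returns an iterable of rows, so the clock starts at submission. The `slow_rows` generator is only a stand-in for a database cursor that streams rows with some latency.

```python
import time
from typing import Callable, Iterable, Tuple

def measure_fetch(submit: Callable[[], Iterable]) -> Tuple[float, float, int]:
    """Return (seconds to first row, seconds to last row, row count)."""
    start = time.perf_counter()
    first = None
    count = 0
    for _ in submit():  # the query is submitted here, after the clock starts
        if count == 0:
            first = time.perf_counter() - start
        count += 1
    total = time.perf_counter() - start
    # With an empty result, the first-row time is reported as the total time.
    return (first if first is not None else total), total, count

def slow_rows(n, delay=0.001):
    """Stand-in for a cursor that delivers rows one at a time with latency."""
    for i in range(n):
        time.sleep(delay)
        yield (i,)

first_row, last_row, n = measure_fetch(lambda: slow_rows(20))
print(f"first row after {first_row * 1000:.1f} ms, "
      f"all {n} rows after {last_row * 1000:.1f} ms")
```

With a real driver, the callable would execute the statement and return the cursor, so that both numbers include the time the database spends before producing any output.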

The plan tree gives a clear view of what each part of the query does. It shows:

- The order of execution.

- Performance details of each operation.

Final Touch : Here are some suggestions for improving query execution performance:

- Load optimization in the PostgreSQL database: A cache can minimize the load on the underlying system. With a cache defined, fewer queries are executed on the underlying system.

- Consistent reporting: A cache is also useful if a user wants to see the same results when running a report several times within a specific period (day, week, or month). This is typically what report users expect, because it can be quite confusing when the same report returns different results every time it is run. In this case, a cache might be necessary if the contents of the underlying database are constantly being updated.

- Source availability: If the underlying database is not always available, a periodically refreshed cache might enable 24×7 operation. For example, the underlying system might be an old production system that starts at 6 a.m. and is shut down at 8 p.m., while the virtual table defined on it offers 24×7 availability. A cache can be the solution.
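The three caching suggestions above can be illustrated with a small periodically refreshed cache. This is a minimal sketch under stated assumptions, not a feature of dbForge Studio or PostgreSQL: `RefreshingCache`, `run_report`, and the injected clock are hypothetical names, and the "source database" is simulated by a function that can be switched offline.

```python
import time
from typing import Any, Callable, Optional

class RefreshingCache:
    """Serve a stored result and reload it once it is older than `max_age` seconds."""

    def __init__(self, load: Callable[[], Any], max_age: float,
                 clock: Callable[[], float] = time.monotonic):
        self._load = load
        self._max_age = max_age
        self._clock = clock
        self._value: Any = None
        self._loaded_at: Optional[float] = None

    def get(self) -> Any:
        now = self._clock()
        stale = self._loaded_at is None or now - self._loaded_at >= self._max_age
        if stale:
            try:
                self._value = self._load()
                self._loaded_at = now
            except Exception:
                # Source unavailable: keep serving the last good result, if any.
                if self._loaded_at is None:
                    raise
        return self._value

# Simulated source: counts real queries and can be taken offline.
calls = {"count": 0, "online": True}
fake_now = [0.0]  # manually advanced clock so the demo needs no sleeping

def run_report():
    if not calls["online"]:
        raise ConnectionError("source database is offline")
    calls["count"] += 1
    return f"report v{calls['count']}"

cache = RefreshingCache(run_report, max_age=60, clock=lambda: fake_now[0])

first = cache.get()      # loads from the source
second = cache.get()     # served from the cache, no new query
fake_now[0] = 61.0
third = cache.get()      # older than max_age, so it is reloaded
calls["online"] = False
fake_now[0] = 200.0
fourth = cache.get()     # source offline, last good result is served

print(first, second, third, fourth, "| source queries:", calls["count"])
```

The repeated `get()` calls within the refresh window cover load optimization and consistent reporting, and the offline case covers source availability. A production cache would also need to decide how stale a result may become before it is no longer acceptable to serve.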