How to migrate from Elasticsearch 1.7 to 6.8 with zero downtime

My last task at BigPanda was to upgrade an existing service that was using Elasticsearch version 1.7 to a newer Elasticsearch version, 6.8.1.

Table of Contents

- Chapter 1 — What’s in it for me?

- Chapter 2 — The constraints

- Chapter 3 — Problem solving and thinking of a plan

- Zero bugs

- Recovery plan

- Zero downtime migration

- Chapter 4 — The plan

- Where should I start?

- The code

- Chapter 5 — The mapping explosion problem

- Nested documents solution

- Avoiding nested documents

- Chapter 6 — The migration process

- How can we migrate the data?

- Conclusion

In this post, I will share how we migrated from Elasticsearch 1.7 to 6.8 with harsh constraints like zero downtime, no data loss, and zero bugs. I’ll also provide you with a script that does the migration for you.

This post contains 6 chapters (and one is optional):

What’s in it for me? –> What were the new features that led us to upgrade our version?

The constraints –> What were our business requirements?

Problem solving –> How did we address the constraints?

Moving forward –> The plan.

[Optional chapter] –> How did we handle the infamous mapping explosion problem?

Finally –> How do we migrate data between clusters?

Chapter 1 — What’s in it for me?

What benefits were we expecting to unlock by upgrading our data store?

There were a few reasons:

- Performance and stability issues — We were experiencing an enormous number of outages with long MTTR that caused us tons of headaches. This was reflected in frequent high latencies, high CPU usage, and more issues.

- Non-existent support in old Elasticsearch versions — We were missing some operational knowledge in Elasticsearch, and when we looked for outside consulting we were encouraged to migrate forward in order to receive support.

- Dynamic mappings in our schema — Our current schema in Elasticsearch 1.7 used a feature called dynamic mappings that made our cluster explode multiple times. So we wanted to deal with this issue.

- Poor visibility on our existing cluster — We wanted a far better view under the hood and saw that later versions had great metrics exporting tools.

Chapter 2 — The constraints

- ZERO downtime migration — We have active users on our system, and we couldn’t afford for the system to be down while we were migrating.

- Recovery plan — We couldn’t afford to “lose” or “corrupt” data, no matter the cost. So we needed to prepare a recovery plan in case our migration failed.

- Zero bugs — We couldn’t change existing search functionality for end-users.

Chapter 3 — Problem solving and thinking of a plan

Let’s tackle the constraints from the easiest to the most difficult:

Zero bugs

In order to deal with this requirement, I studied all the possible requests the service receives and what its outputs were. Then I added unit-tests where needed.

In addition, I added multiple metrics (to both the existing Elasticsearch Indexer and the new Elasticsearch Indexer) to track latency, throughput, and performance, which allowed me to validate that we only improved them.
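As a rough illustration of that kind of side-by-side measurement, here is a minimal sketch that records latencies for two code paths in memory. The `LatencyRecorder` class and the two handler functions are hypothetical stand-ins, not the service's real code, and a real setup would send these samples to a metrics backend instead of keeping them in a dict.

```python
import time
from collections import defaultdict
from contextlib import contextmanager
from statistics import median


class LatencyRecorder:
    """Keeps per-operation latency samples in memory (stand-in for a metrics client)."""

    def __init__(self):
        self.samples = defaultdict(list)

    @contextmanager
    def timed(self, name):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record the elapsed time even if the wrapped code raises.
            self.samples[name].append(time.perf_counter() - start)

    def count(self, name):
        return len(self.samples[name])

    def median_ms(self, name):
        return median(self.samples[name]) * 1000.0


recorder = LatencyRecorder()


def handle_with_old_indexer(command):  # hypothetical handler
    with recorder.timed("old_indexer"):
        return {"indexed": command}


def handle_with_new_indexer(command):  # hypothetical handler
    with recorder.timed("new_indexer"):
        return {"indexed": command}


for i in range(100):
    handle_with_old_indexer({"id": i})
    handle_with_new_indexer({"id": i})

for name in ("old_indexer", "new_indexer"):
    print(name, recorder.count(name), "calls, median ms:", recorder.median_ms(name))
```

Comparing the same metric names across both indexers is what makes a claim like "we only improved them" checkable.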

Recovery plan

This means that I needed to handle the following situation: I deployed the new code to production and things weren’t working as expected. What could I do about it then?

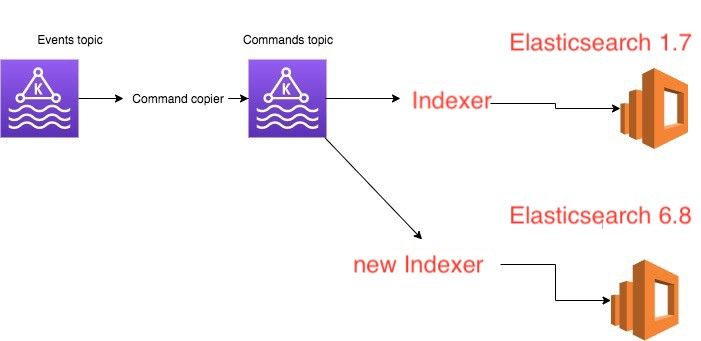

Since I was working on a service that used event sourcing, I could add another listener (see the diagram) and start writing to a new Elasticsearch cluster without affecting production.

Zero downtime migration

The current service is in live mode and can’t be “deactivated” for periods longer than 5–10 minutes. The trick to getting this right is this:

- Store a log of all the actions your service is handling (we use Kafka in production)

- Start the migration process offline (and keep track of the offset before you started the migration)

- When the migration ends, start the new service against the log from the recorded offset and catch up on the lag

- Once the lag is closed, change your frontend to query against the new service and you’re done
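The four steps above can be sketched with an in-memory list standing in for the Kafka log. This is a toy model under stated assumptions, not the production code: `log`, `old_store`, `new_store`, and `apply` are hypothetical names, the bulk copy is modelled as a plain dict copy, and every command is assumed to be an upsert keyed by document id.

```python
def apply(store, command):
    """Apply one (doc_id, doc) upsert command to a store."""
    doc_id, doc = command
    store[doc_id] = doc


# Step 1: every action the service handles is kept in an ordered log.
log = [("1", {"name": "a"}), ("2", {"name": "b"})]
old_store = {}
for command in log:
    apply(old_store, command)

# Step 2: remember the offset, then copy the existing data offline.
start_offset = len(log)
new_store = dict(old_store)

# Meanwhile the live service keeps handling traffic against the old store.
for command in [("3", {"name": "c"}), ("1", {"name": "a-updated"})]:
    log.append(command)
    apply(old_store, command)

# Step 3: start the new service from the recorded offset and catch up.
position = start_offset
while position < len(log):
    apply(new_store, log[position])
    position += 1

# Step 4: only switch the frontend once the lag is zero.
lag = len(log) - position
print("lag:", lag, "stores equal:", new_store == old_store)
```

In a real migration the copy is not atomic, so some documents may already include writes made after the recorded offset; replaying those writes again is harmless as long as they are idempotent.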

Chapter 4 — The plan

Our current service uses the following architecture (based on message passing in Kafka):

- Event topic contains events produced by other applications (for example, UserId 3 created)

- Command topic contains the interpretation of those events into specific commands used by this application (for example: Add userId 3)

- Elasticsearch 1.7 — The datastore for the command topic, which is read by the Elasticsearch Indexer.

We planned to add another consumer (a new Elasticsearch Indexer) to the command topic, which would read the exact same messages and write them in parallel to Elasticsearch 6.8.

Where should I start?

To be honest, I considered myself a newbie Elasticsearch user. To feel confident performing this task, I had to think about the best way to approach this topic and learn it. A few things that helped were:

- Documentation — It’s an insanely useful resource for everything Elasticsearch. Take the time to read it and take notes (don’t miss: Mapping and QueryDsl).

- HTTP API — everything under CAT API. This was super useful to debug things locally and see how Elasticsearch responds (don’t miss: cluster health, cat indices, search, delete index).

- Metrics (❤️) — From the first day, we configured a shiny new dashboard with lots of cool metrics (taken from elasticsearch-exporter-for-Prometheus) that helped and pushed us to understand more about Elasticsearch.

The code

Our codebase was using a library called elastic4s, and was on the oldest release available in the library — a really good reason to migrate! So the first thing to do was just to migrate versions and see what broke.

There are a few tactics for doing this kind of code migration. The tactic we chose was to try to restore existing functionality first on the new Elasticsearch version, without rewriting all the code from scratch. In other words, to reach the existing functionality but on a newer version of Elasticsearch.

Luckily for us, the code already had almost full test coverage, so our task was much simpler, and it took around 2 weeks of development time.

It’s important to note that, if that wasn’t the case, we would have had to invest some time in filling that coverage up. Only then would we be able to migrate since one of our constraints was to not break existing functionality.

Chapter 5 — The mapping explosion problem

Let’s describe our use-case in more detail. This is our model:

```scala
class InsertMessageCommand(tags: Map[String,String])
```

And for example, an instance of this message would be:

```scala
new InsertMessageCommand(Map("name"->"dor","lastName"->"sever"))
```

And given this model, we needed to support the following query requirements:

- Query by value

- Query by tag name and value

The way this was modeled in our Elasticsearch 1.7 schema was using a dynamic template schema (since the tag keys are dynamic, and cannot be modeled in advance).
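To see why dynamic tag keys are risky, here is a toy model of what a dynamic template does to the mapping: every distinct tag key becomes its own mapped field. The `dynamic_mapping_fields` helper and the sample documents are hypothetical and only count field names; they do not talk to Elasticsearch.

```python
def dynamic_mapping_fields(documents):
    """Return the field paths a per-key dynamic mapping would end up containing."""
    fields = set()
    for doc in documents:
        for key in doc["tags"]:
            # One new mapping entry per tag key that has never been seen before.
            fields.add("actions.tags." + key)
    return fields


docs = [
    {"tags": {"name": "dor", "lastName": "sever"}},
    {"tags": {"host": "web-1", "region": "eu"}},
    {"tags": {"name": "john", "team": "search"}},
]

fields = dynamic_mapping_fields(docs)
print(len(fields), sorted(fields))
```

Three small documents already produce five mapped fields, and the count keeps growing with every new key a customer invents, which is the growth pattern behind the mapping explosion problem.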

The dynamic template caused us multiple outages due to the mapping explosion problem, and the schema looked like this:

```bash
curl -X PUT "localhost:9200/_template/my_template?pretty" -H 'Content-Type: application/json' -d '
{
    "index_patterns": [
        "your-index-names*"
    ],
    "mappings": {
        "_doc": {
            "dynamic_templates": [
                {
                    "tags": {
                        "mapping": {
                            "type": "text"
                        },
                        "path_match": "actions.tags.*"
                    }
                }
            ]
        }
    },
    "aliases": {}
}'

curl -X PUT "localhost:9200/your-index-names-1/_doc/1?pretty" -H 'Content-Type: application/json' -d'
{
  "actions": {
    "tags" : {
        "name": "John",
        "lname" : "Smith"
    }
  }
}
'

curl -X PUT "localhost:9200/your-index-names-1/_doc/2?pretty" -H 'Content-Type: application/json' -d'
{
  "actions": {
    "tags" : {
        "name": "Dor",
        "lname" : "Sever"
    }
  }
}
'

curl -X PUT "localhost:9200/your-index-names-1/_doc/3?pretty" -H 'Content-Type: application/json' -d'
{
  "actions": {
    "tags" : {
        "name": "AnotherName",
        "lname" : "AnotherLastName"
    }
  }
}
'
```

```bash
curl -X GET "localhost:9200/_search?pretty" -H 'Content-Type: application/json' -d'
{
    "query": {
        "match" : {
            "actions.tags.name" : {
                "query" : "John"
            }
        }
    }
}
'
# returns 1 match(doc 1)


curl -X GET "localhost:9200/_search?pretty" -H 'Content-Type: application/json' -d'
{
    "query": {
        "match" : {
            "actions.tags.lname" : {
                "query" : "John"
            }
        }
    }
}
'
# returns zero matches

# search by value
curl -X GET "localhost:9200/_search?pretty" -H 'Content-Type: application/json' -d'
{
    "query": {
        "query_string" : {
            "fields": ["actions.tags.*" ],
            "query" : "Dor"
        }
    }
}
'
```

Nested documents solution

Our first instinct in solving the mapping explosion problem was to use nested documents.

We read the nested data type tutorial in the Elastic docs and defined the following schema and queries:

```bash
curl -X PUT "localhost:9200/my_index?pretty" -H 'Content-Type: application/json' -d'
{
    "mappings": {
        "_doc": {
            "properties": {
                "tags": {
                    "type": "nested"
                }
            }
        }
    }
}
'

curl -X PUT "localhost:9200/my_index/_doc/1?pretty" -H 'Content-Type: application/json' -d'
{
  "tags" : [
    {
      "key" : "John",
      "value" :  "Smith"
    },
    {
      "key" : "Alice",
      "value" :  "White"
    }
  ]
}
'


# Query by tag key and value
curl -X GET "localhost:9200/my_index/_search?pretty" -H 'Content-Type: application/json' -d'
{
  "query": {
    "nested": {
      "path": "tags",
      "query": {
        "bool": {
          "must": [
            { "match": { "tags.key": "Alice" }},
            { "match": { "tags.value":  "White" }}
          ]
        }
      }
    }
  }
}
'
# Returns 1 document


# Query by tag value
curl -X GET "localhost:9200/my_index/_search?pretty" -H 'Content-Type: application/json' -d'
{
  "query": {
    "nested": {
      "path": "tags",
      "query": {
        "bool": {
          "must": [
            { "match": { "tags.value":  "Smith" }}
          ]
        }
      }
    }
  }
}
'
# Returns 1 result
```

And this solution worked. However, once we tried to insert real customer data we saw that the number of documents in our index increased by around 500 times.

We considered the following problems and went on to look for a better solution:

- The number of documents we had in our cluster was around 500 million. This meant that, with the new schema, we were going to reach 250 billion documents (that’s 250,000,000,000 documents 😱).

- We read this blog post: https://blog.gojekengineering.com/elasticsearch-the-trouble-with-nested-documents-e97b33b46194 which highlights that nested documents can cause high latency in queries and heap usage problems.

- Testing — Since we were converting 1 document in the old cluster to an unknown number of documents in the new cluster, it would be much harder to track whether the migration process worked without any data loss. If our conversion was 1:1, we could assert that the count in the old cluster equalled the count in the new cluster.
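With a 1:1 conversion, the data-loss check reduces to comparing two numbers. Here is a minimal sketch of that assertion, assuming client objects that expose a `count(index=...)` method returning a dict with a `"count"` key, which is how the official Python clients respond. `FakeClient` and the index names are hypothetical stand-ins so the example runs without a cluster.

```python
def counts_match(old_client, new_client, old_index, new_index):
    """Compare document counts between the old and the new cluster."""
    old_count = old_client.count(index=old_index)["count"]
    new_count = new_client.count(index=new_index)["count"]
    return old_count == new_count, old_count, new_count


class FakeClient:
    """Hypothetical in-memory stand-in for an Elasticsearch client."""

    def __init__(self, doc_count):
        self.doc_count = doc_count

    def count(self, index):
        return {"count": self.doc_count}


ok, old_count, new_count = counts_match(
    FakeClient(500), FakeClient(500), "old-index", "new-index"
)
print("match:", ok, "old:", old_count, "new:", new_count)
```

A matching count does not prove every document was transformed correctly, but a mismatch is a cheap and immediate signal that something was dropped.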

Avoiding nested documents

The real trick here was to focus on the queries we actually needed to support: search by tag value, and search by tag key and value.

The first query doesn’t require nested documents since it works on a single field. For the latter, we used the following trick. We created a field that contains the combination of the key and the value. Whenever a user queries on a key, value match, we translate their request to the corresponding text and query against that field.
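The translation step can be sketched in a few lines of Python. The field names `keyToValue` and `value` and the `:` separator follow the example in this section; the helper functions themselves are hypothetical and only build the JSON bodies, they do not send anything to Elasticsearch.

```python
SEPARATOR = ":"


def to_indexed_tags(tags):
    """Turn a {key: value} tag map into the flat list stored in the index."""
    return [
        {"keyToValue": f"{key}{SEPARATOR}{value}", "value": value}
        for key, value in tags.items()
    ]


def key_value_query(key, value):
    """Query body for 'tag key AND value': one match on the combined field."""
    combined = f"{key}{SEPARATOR}{value}"
    return {"query": {"bool": {"must": [{"match": {"tags.keyToValue": combined}}]}}}


def value_query(value):
    """Query body for 'tag value only'."""
    return {"query": {"bool": {"must": [{"match": {"tags.value": value}}]}}}


print(to_indexed_tags({"John": "Smith", "Alice": "White"}))
print(key_value_query("Alice", "White"))
print(value_query("White"))
```

One caveat with this sketch: if a key or value can itself contain the separator, two different pairs could produce the same combined string, so the separator would need to be a character that never appears in the data, or be escaped.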

Example:

```bash
curl -X PUT "localhost:9200/my_index_2?pretty" -H 'Content-Type: application/json' -d'
{
    "mappings": {
        "_doc": {
            "properties": {
                "tags": {
                    "type": "object",
                    "properties": {
                        "keyToValue": {
                            "type": "keyword"
                        },
                        "value": {
                            "type": "keyword"
                        }
                    }
                }
            }
        }
    }
}
'


curl -X PUT "localhost:9200/my_index_2/_doc/1?pretty" -H 'Content-Type: application/json' -d'
{
  "tags" : [
    {
      "keyToValue" : "John:Smith",
      "value" : "Smith"
    },
    {
      "keyToValue" : "Alice:White",
      "value" : "White"
    }
  ]
}
'

# Query by key,value
# User queries for key: Alice, and value : White , we then query elastic with this query:

curl -X GET "localhost:9200/my_index_2/_search?pretty" -H 'Content-Type: application/json' -d'
{
  "query": {
    "bool": {
      "must": [ { "match": { "tags.keyToValue": "Alice:White" }}]
    }
  }
}
'

# Query by value only
curl -X GET "localhost:9200/my_index_2/_search?pretty" -H 'Content-Type: application/json' -d'
{
  "query": {
    "bool": {
      "must": [ { "match": { "tags.value": "White" }}]
    }
  }
}
'
```

Chapter 6 — The migration process

We planned to migrate about 500 million documents with zero downtime. To do that we needed:

- A strategy for how to transfer data from the old Elasticsearch to the new Elasticsearch

- A strategy for how to close the lag between the start of the migration and the end of it

And our two options for closing the lag:

- Our messaging system is Kafka based. We could have just taken the current offset before the migration started, and after the migration ended, started consuming from that specific offset. This solution requires some manual tweaking of offsets and some other things, but it will work.

- Another approach to solving this issue was to start consuming messages from the beginning of the topic in Kafka and make our actions on Elasticsearch idempotent — meaning, if the change was already “applied”, nothing would change in the Elastic store.

The requests made by our service against Elastic were already idempotent, so we chose option 2 because it required zero manual work (no need to record specific offsets and then set them afterward in a new consumer group).
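The reason option 2 is safe can be shown with a small model. This is a sketch under stated assumptions: commands are either an upsert or a delete keyed by document id, a dict stands in for the new cluster, and `apply_command` and `retained_topic` are hypothetical names.

```python
def apply_command(store, command):
    """Apply one command; applying it again leaves the store unchanged."""
    action, doc_id, doc = command
    if action == "upsert":
        store[doc_id] = doc        # same input always yields the same stored doc
    elif action == "delete":
        store.pop(doc_id, None)    # deleting a missing doc is a no-op


# Whatever is still retained in the topic, oldest message first.
retained_topic = [
    ("upsert", "1", {"name": "dor"}),
    ("upsert", "2", {"name": "john"}),
    ("delete", "2", None),
    ("upsert", "1", {"name": "dor", "lastName": "sever"}),
]

# State of the new cluster right after the bulk copy: it already reflects every command.
new_store = {"1": {"name": "dor", "lastName": "sever"}}
expected = dict(new_store)

# Replay the whole retained topic from the beginning, with no offset bookkeeping.
for command in retained_topic:
    apply_command(new_store, command)

converged = new_store == expected
print("converged:", converged)
```

During the replay the store briefly passes through older states (document 2 reappears and is deleted again), so the new cluster should only start serving queries once the consumer has caught up.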

How can we migrate the data?

These were the options we thought of:

- If our Kafka contained all messages from the beginning of time, we could just replay from the start and the end state would be equal. But since we apply retention to our topics, this wasn’t an option.

- Dump messages to disk and then ingest them into Elastic directly – This solution looked kind of weird. Why store them on disk instead of just writing them directly to Elastic?

- Transfer messages from the old Elastic to the new Elastic — This meant writing some kind of “script” (did anyone say Python? 😃) that would connect to the old Elasticsearch cluster, query for items, transform them to the new schema, and index them in the new cluster.

We chose the last option. These were the design choices we had in mind:

- Let’s not try to think about error handling unless we need to. Let’s try to write something super simple, and if errors occur, let’s try to address them. In the end, we didn’t need to address this issue since no errors occurred during the migration.

- It’s a one-off operation, so whatever works first / KISS.

- Metrics — Since the migration process can take hours to days, we wanted the ability, from day 1, to monitor the error count and to track the current progress and copy rate of the script.

We thought long and hard and chose Python as our weapon of choice. The final version of the code is below:
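To make "progress and copy rate" concrete, here is a minimal sketch of the bookkeeping such a script needs. The `MigrationProgress` class is hypothetical, and the clock is injectable only so the example is deterministic; a real run would use the default monotonic clock and report the numbers to a metrics system.

```python
import time


class MigrationProgress:
    """Tracks success and failure counts, copy rate, and a naive time estimate."""

    def __init__(self, total, clock=time.monotonic):
        self.total = total
        self.ok = 0
        self.failed = 0
        self._clock = clock
        self._started = clock()

    def record(self, ok):
        if ok:
            self.ok += 1
        else:
            self.failed += 1

    def processed(self):
        return self.ok + self.failed

    def rate(self):
        """Documents per second since the tracker was created."""
        elapsed = self._clock() - self._started
        return self.processed() / elapsed if elapsed > 0 else 0.0

    def eta_seconds(self):
        """Seconds left at the current rate, or None if nothing was copied yet."""
        current_rate = self.rate()
        if current_rate <= 0:
            return None
        return (self.total - self.processed()) / current_rate


# Deterministic demo: a fake clock that we advance by hand.
now = [0.0]
progress = MigrationProgress(total=1000, clock=lambda: now[0])
for _ in range(100):
    progress.record(ok=True)
now[0] = 10.0  # pretend ten seconds have passed
print("rate:", progress.rate(), "docs/s, eta:", progress.eta_seconds(), "s")
```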

```text
dictor==0.1.2 - to copy and transform our Elasticsearch documents
elasticsearch==1.9.0 - to connect to "old" Elasticsearch
elasticsearch6==6.4.2 - to connect to the "new" Elasticsearch
statsd==3.3.0 - to report metrics
```

```python
import logging
import sys

import statsd
from elasticsearch import Elasticsearch
from elasticsearch.helpers import scan
from elasticsearch6 import Elasticsearch as Elasticsearch6
from elasticsearch6.helpers import parallel_bulk

# Usage: script.py <source_url> <target_url> <source_index> <target_index>
ES_SOURCE = Elasticsearch(sys.argv[1])
ES_TARGET = Elasticsearch6(sys.argv[2])
INDEX_SOURCE = sys.argv[3]
INDEX_TARGET = sys.argv[4]
QUERY_MATCH_ALL = {"query": {"match_all": {}}}
SCAN_SIZE = 1000
SCAN_REQUEST_TIMEOUT = '3m'
REQUEST_TIMEOUT = 180
MAX_CHUNK_BYTES = 15 * 1024 * 1024
RAISE_ON_ERROR = False
# Assumed value: the published script used BULK_SIZE without defining it.
BULK_SIZE = 1000

logger = logging.getLogger("elastic_migration")


def transform_item(item, index_target):
    # implement your logic transformation here
    transformed_source_doc = item.get("_source")
    return {"_index": index_target,
            "_type": "_doc",
            "_id": item['_id'],
            "_source": transformed_source_doc}


def transformedStream(es_source, match_query, index_source, index_target, transform_logic_func):
    # Lazily scroll through the source index and transform each hit.
    for item in scan(es_source, query=match_query, index=index_source, size=SCAN_SIZE,
                     timeout=SCAN_REQUEST_TIMEOUT):
        yield transform_logic_func(item, index_target)


def index_source_to_target(es_source, es_target, match_query, index_source, index_target, bulk_size, statsd_client,
                           logger, transform_logic_func):
    ok_count = 0
    fail_count = 0
    # Report the total up front so progress can be tracked against it.
    count_response = es_source.count(index=index_source, body=match_query)
    count_result = count_response['count']
    statsd_client.gauge(stat='elastic_migration_document_total_count,index={0},type=success'.format(index_target),
                        value=count_result)
    with statsd_client.timer('elastic_migration_time_ms,index={0}'.format(index_target)):
        actions_stream = transformedStream(es_source, match_query, index_source, index_target, transform_logic_func)
        for (ok, item) in parallel_bulk(es_target,
                                        chunk_size=bulk_size,
                                        max_chunk_bytes=MAX_CHUNK_BYTES,
                                        actions=actions_stream,
                                        request_timeout=REQUEST_TIMEOUT,
                                        raise_on_error=RAISE_ON_ERROR):
            if not ok:
                logger.error("got error on index {} which is : {}".format(index_target, item))
                fail_count += 1
                statsd_client.incr('elastic_migration_document_count,index={0},type=failure'.format(index_target),
                                   1)
            else:
                ok_count += 1
                statsd_client.incr('elastic_migration_document_count,index={0},type=success'.format(index_target),
                                   1)

    return ok_count, fail_count


statsd_client = statsd.StatsClient(host='localhost', port=8125)

if __name__ == "__main__":
    # The published call omitted the logger argument; it is passed here.
    index_source_to_target(ES_SOURCE, ES_TARGET, QUERY_MATCH_ALL, INDEX_SOURCE, INDEX_TARGET, BULK_SIZE,
                           statsd_client, logger, transform_item)
```

Conclusion

Migrating data in a live production system is a complicated task that requires a lot of attention and careful planning. I recommend taking the time to work through the steps listed above and figure out what works best for your needs.

As a rule of thumb, always try to reduce your requirements as much as possible. For example, is a zero downtime migration required? Can you afford data loss?

Upgrading data stores is usually a marathon and not a sprint, so take a deep breath and try to enjoy the ride.

- The whole process listed above took me around 4 months of work

- All of the Elasticsearch examples that appear in this post were tested against version 6.8.1